Measurable returns from artificial intelligence investments remain elusive for most organizations, and new research indicates that infrastructure complexity, not technological capability, is the primary obstacle to AI success. According to an exhaustive DDN study, 65% of organizations report that their AI environments are too complex to manage effectively, creating a cascade of operational failures that undermine project viability.

Infrastructure complexity, not technological limitations, has emerged as the defining barrier preventing organizations from realizing returns on AI investments.

This complexity shows up in stark business outcomes. More than half of organizations have delayed or canceled AI initiatives in the past two years, and more than half of enterprise AI projects have been shelved entirely because of infrastructure challenges. Most alarmingly, MIT’s Project NANDA found that 95% of organizations see zero measurable return from their generative AI investments, while only 15% of AI decision-makers reported any EBITDA lift for their organizations. Centralized platforms that simplify connectivity, for example through pre-built connectors, can help reduce friction between systems and streamline operations.

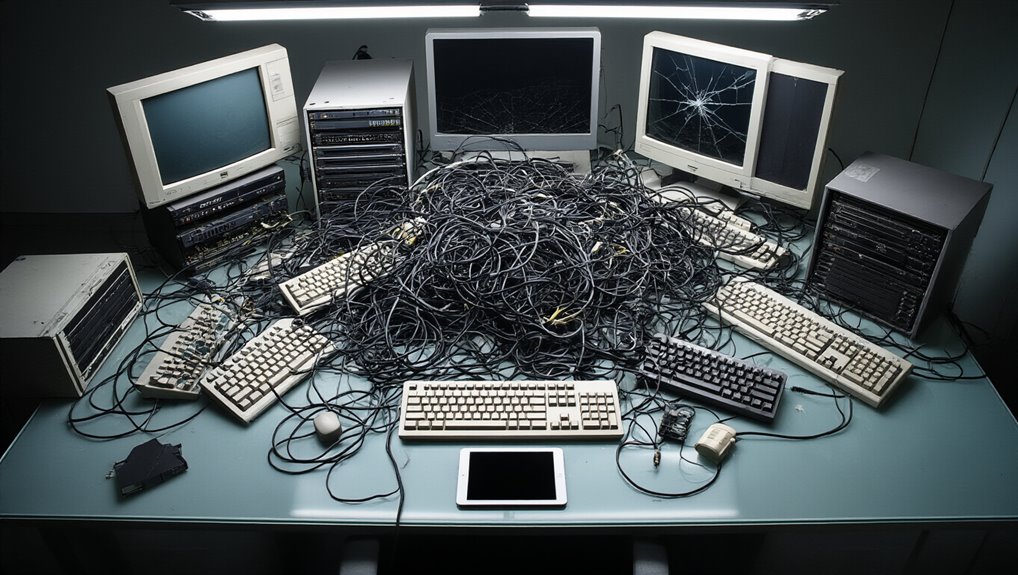

Infrastructure fragmentation lies at the heart of these failures. Organizations deploy workloads across disconnected patchworks of cloud and on-premises solutions, which requires continuous, complex data movement between separate systems. Manual orchestration across disparate silos consumes operational resources and creates bottlenecks that prevent efficient scaling. Legacy infrastructure and siloed datasets affect 76% of leaders, who identify the data layer, rather than GPU or model capability, as the primary bottleneck.

Resource utilization adds another dimension to the crisis. Underutilized GPUs and rising power costs erode financial viability: when data pipelines are sluggish, GPUs sit idle waiting for data instead of running at full utilization. Nearly all organizations (93%) are actively seeking to reduce AI’s energy impact, as unplanned energy requirements stall projects and consume potential ROI. Power and cooling costs are the top infrastructure constraint for 47% of respondents, further limiting deployment options.
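To make the utilization point concrete, here is a minimal back-of-the-envelope sketch in Python. It assumes a simple model in which a GPU can only be as busy as its data pipeline allows; the function names and all input figures are hypothetical illustrations, not numbers from the DDN study.

```python
def gpu_utilization(pipeline_samples_per_s: float, gpu_samples_per_s: float) -> float:
    """Estimate the fraction of time a GPU is busy.

    Assumes (for illustration) that the GPU stalls whenever the data
    pipeline delivers samples more slowly than the GPU can consume them.
    """
    if pipeline_samples_per_s < 0 or gpu_samples_per_s <= 0:
        raise ValueError("throughputs must be positive")
    return min(1.0, pipeline_samples_per_s / gpu_samples_per_s)


def cost_per_useful_gpu_hour(hourly_cost: float, utilization: float) -> float:
    """Effective price of one hour of actual GPU work at a given utilization."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    return hourly_cost / utilization


if __name__ == "__main__":
    # Hypothetical inputs: the pipeline feeds 600 samples/s to a GPU
    # that could process 1000 samples/s, at a nominal 4.00 per GPU-hour.
    util = gpu_utilization(600, 1000)
    print(f"utilization: {util:.0%}")
    print(f"cost per useful GPU-hour: {cost_per_useful_gpu_hour(4.00, util):.2f}")
```

Under this simplified model, a pipeline that delivers data at a fraction of the GPU's capacity raises the effective cost of useful compute by the inverse of that fraction, which is why the data layer rather than the GPU itself can dominate the economics.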

Workforce challenges compound these technical obstacles. Most internal teams (83%) struggle with AI workloads today, facing persistent skills gaps that prevent effective infrastructure management. Resource-intensive manual processes tie up the limited skilled personnel who could otherwise drive innovation.

The situation is becoming more urgent: AI workloads are projected to grow 110% in the next year, more than doubling. Industry analysts expect the crisis to intensify, with Gartner forecasting that more than 40% of agentic AI projects will be canceled by the end of 2027. While 97% of organizations agree that cloud infrastructure is essential to scaling AI, seamless integration between cloud and on-premises environments remains critical for efficient data distribution and operational success.