Beneath the gleaming AI announcements and ambitious pilot programs lies a troubling reality: 95% of corporate generative AI experiments fail to deliver any measurable impact on the profit and loss statement. Only 5% of these initiatives achieve rapid revenue acceleration, while most stall despite the frantic rush to integrate new models. The problem is not regulation or model performance; it is flawed integration and uncoordinated chaos.

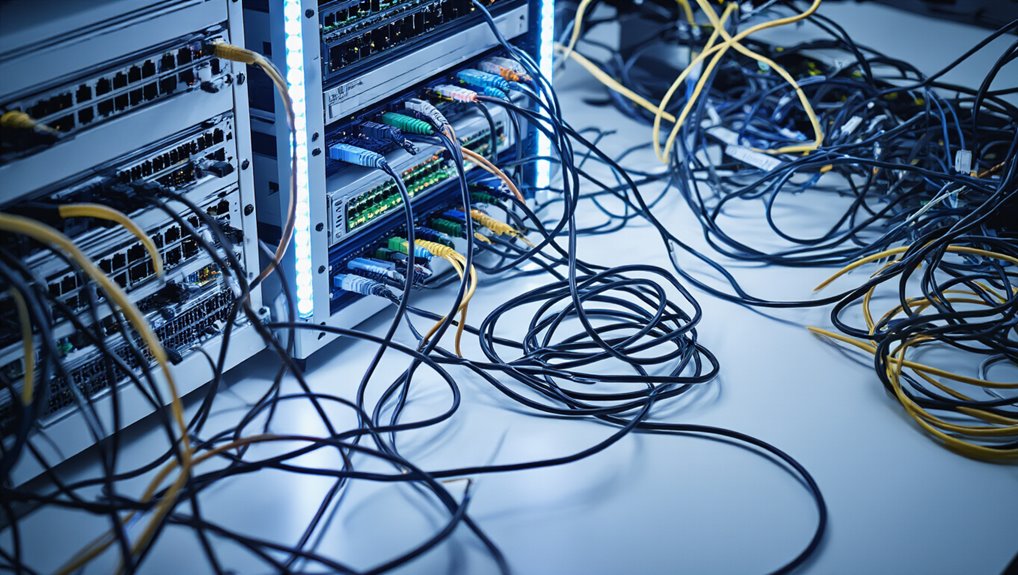

Generic tools like ChatGPT fail in enterprise environments because they don’t adapt to specific workflows. Tools that serve individual users well cannot learn from enterprise processes or coordinate with existing systems. The result is model drift, redundant development, and inconsistent outputs across teams. Knowledge remains trapped in silos, invisible to AI systems, which slows critical decision-making. Shadow AI usage proliferates as employees deploy unsanctioned tools without IT oversight, compounding the disorder. Strong API integration practices can reduce data silos and improve system interoperability.

Resource misalignment makes the chaos worse. Companies allocate over half their generative AI budgets to sales and marketing tools, yet the highest ROI comes from back-office automation that reduces outsourcing and agency costs. Competing systems with misaligned goals pull organizations in different directions. Semantic inconsistencies between teams break data pipelines, while talent fragments across data, machine learning, and business units without coordination.

A striking perception gap separates executives from individual contributors. While 80% of VP and C-suite leaders rate AI-assisted work as high quality, only 28% of individual contributors agree. Moreover, 61% of executives value employees using AI compared to just 13% of ICs. Only 30% of employees can correctly identify AI-generated content, and 23% of ICs fail to disclose AI use versus 6% of executives.

The path forward requires abandoning chaotic experimentation in favor of strategic control. Forward-thinking enterprises design for alignment by deploying agentic AI systems that learn, remember, and act independently within defined boundaries. These intelligent agents sense workflow shifts and coordinate actions across systems while enforcing policies in real time. Companies achieve more reliable outcomes when they purchase specialized solutions rather than building proprietary systems internally. Organizations must adopt zero-copy data architectures that activate insights directly at the source without unnecessary data movement across hundreds of fragmented systems.
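To make "acting independently within defined boundaries" concrete, here is a minimal Python sketch of a policy check that runs before an agent touches any system. Everything in it is an illustrative assumption rather than a reference to a real product or framework: the `Action`, `Policy`, and `BoundedAgent` names, the system and operation names, and the spend cap are all hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass(frozen=True)
class Action:
    """A step an agent proposes to take against an enterprise system."""
    system: str          # hypothetical target system, e.g. "invoicing"
    operation: str       # hypothetical operation, e.g. "approve_payment"
    amount: float = 0.0  # monetary value involved, if any


@dataclass
class Policy:
    """Boundaries for one agent: permitted operations and a spend cap."""
    allowed: set[tuple[str, str]]
    max_amount: float

    def permits(self, action: Action) -> bool:
        in_scope = (action.system, action.operation) in self.allowed
        return in_scope and action.amount <= self.max_amount


@dataclass
class BoundedAgent:
    policy: Policy
    audit_log: list[tuple[Action, str]] = field(default_factory=list)

    def act(self, action: Action) -> str:
        """Run the action only if policy permits it; otherwise escalate."""
        outcome = "executed" if self.policy.permits(action) else "escalated"
        # Every decision is recorded, so IT retains oversight of agent activity.
        self.audit_log.append((action, outcome))
        return outcome


# Assumed example policy: the agent may approve payments up to 5,000.
policy = Policy(allowed={("invoicing", "approve_payment")}, max_amount=5_000.0)
agent = BoundedAgent(policy)

print(agent.act(Action("invoicing", "approve_payment", 1_200.0)))  # executed
print(agent.act(Action("invoicing", "approve_payment", 9_000.0)))  # escalated
print(agent.act(Action("crm", "delete_account")))                  # escalated
```

The design choice worth noting is that the policy check and the audit log sit in one place, outside the agent's own reasoning, so out-of-bounds requests are escalated to a human rather than silently dropped or executed.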

Companies must cultivate disciplined experimentation cultures: train employees properly on AI, dispel myths, and focus on stabilizing volatility rather than glorifying uncontrolled pilots that produce minimal business value.